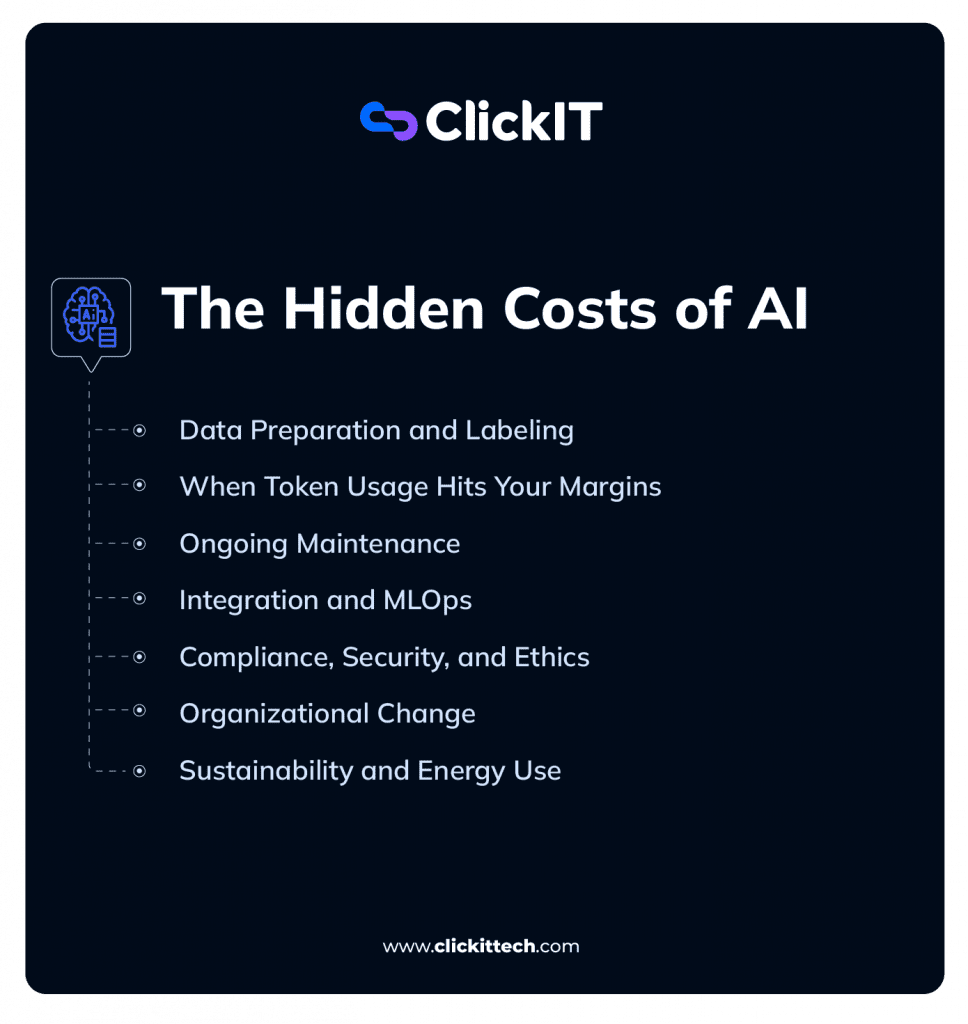

What if your most promising AI initiative is quietly morphing into your biggest operational liability? This is where The Hidden Costs of AI begin to surface, not in the AI itself, but in the infrastructure required to keep it afloat.

Most organizations dive headfirst into AI because of the “magic” of a successful demo. The initial API charges are reasonable, and the initial success is just plain amazing. But as you move from a small group of users to a full-scale rollout, the complexity escalates.

Now, token usage is through the roof, cloud prices are stratospheric, and your engineering team is busy with maintenance cycles rather than working on new product features.

Each leader in the organization feels this pressure from a different angle:

- The CEO is looking at the margins. Will this actually drive growth, or are we just subsidizing an expensive experiment? If you don’t account for The Hidden Costs of AI, you risk eroding the very margins you intended to expand.

- The CTO is worried about “black box” architecture and vendor lock-in. How do we scale without becoming entirely dependent on a single provider’s pricing whims?

- The COO sees the friction on the ground. Is AI actually streamlining the workflow, or is it just introducing new, unpredictable ways for things to break?

- The VP of Engineering is catching the fallout, fighting model drift, managing massive vector databases, and losing sleep over “infinite loops” in AI agents that could drain a budget in a weekend.

The hard truth is that these costs are systemic. They aren’t “bugs”; they are part of the ecosystem. If you don’t plan for data validation layers, observability tools, and the 20% annual “tax” for retraining and compliance, you aren’t building a product. You’re building a prototype that will collapse under its own weight.

The Hidden Costs of AI Systems are only “hidden” if you aren’t looking at the full lifecycle of the technology.

To help you see the full picture, we’re breaking down the hard realities every tech leader needs to plan for.

We’ll look at why AI infrastructure and cloud bills tend to explode faster than expected, and why cleaning your data usually ends up being your biggest upfront cost. We also dig into the “unspoken” costs: the 15–20% annual budget you’ll need just for maintenance and retraining, plus the extra 10–20% “governance tax” that comes with regulated industries.

Without features like model cascading and financial circuit breakers, AI can easily eat into your margins instead of growing them.

Blog Overview

| Sr. No. | The Core Challenge | The Financial Reality |
| --- | --- | --- |
| 1 | Data Preparation and Labeling: Your Biggest Early Expense | Messy data isn’t just a quality problem; it’s a budget leak that forces your best engineers to spend weeks on manual cleanup. |
| 2 | Cloud Infrastructure: Where Token Usage Hits Your Margins | Using a massive “Frontier” model for every basic query is like using a Ferrari to deliver the mail; it’s overkill and a fast way to go broke. |
| 3 | Ongoing Maintenance: Why AI Systems Decay Without Discipline | The moment your AI hits the real world, it starts to “rot,” which is why you need a 20% annual buffer just to keep the lights on. |
| 4 | Integration and MLOps: The Reality of Running at Scale | Launching is just Day One; the real work is managing the memory-hungry “plumbing” that keeps a probabilistic system from falling apart. |
| 5 | Compliance, Security, and Ethics: The Risk Multiplier Few Teams Budget For | In regulated industries, expect a “governance tax” of up to 20% to keep your model from becoming a legal or reputational liability. |
| 6 | Organizational Change: Technology Is Only Half the Equation | If your team doesn’t trust the tool or understand how it changes their daily workflow, your productivity gains will never materialize. |
| 7 | Sustainability and Energy Use: The Environmental Cost Behind the Compute | Scaling without an efficiency plan is a double hit: your cloud bills skyrocket just as your carbon footprint becomes a board-level liability. |
| 8 | The AI Cost Management Framework: Moving from Hype to Financial Discipline | AI projects don’t fail because the models are too expensive; they fail because financial “seatbelts” weren’t baked into the architecture from the start. |

We’re starting with the “Data Trap,” showing you how a simple quality firewall stops you from wasting money on useless tokens. From there, we’ll get into the economics of Model Cascading: the art of letting efficient models handle the grunt work so you aren’t overpaying for “Frontier” reasoning when you don’t actually need it.

Why Are Data Preparation and Labeling Your Biggest Early Expense?

In the early days of an AI project, the real money is burned on the clock, specifically the hundreds of hours your team spends cleaning, labeling, and wrestling with data.

Before a single prompt hits production, your engineers are likely spending weeks defining labeling standards. To understand why budgets spiral, we have to look at the first place The Hidden Costs of AI take root: the data itself.

But here’s the kicker: with LLMs, poor data isn’t just a “quality” problem. It’s a financial one. Messy data leads to longer prompts, unnecessary retries, and failed fine-tuning runs. At scale, a “small data issue” is just a polite way of saying “a massive budget leak.”

Why You Need “ETL for LLMs”

We need to move past the old-school idea of ETL (Extract, Transform, Load) that we used for basic analytics. Production AI requires a much tighter loop. I call this ETL for LLMs.

It’s not just about cleaning: it’s about structured validation.

Think of it as a quality firewall. By using tools like Pydantic for runtime schema enforcement at the application layer, and Great Expectations for upstream data pipeline validation, you get two stages of protection:

- Schema Enforcement: If your model expects a specific JSON format but the data source sends a broken string, a validation layer kills that request immediately. This prevents “hallucination retries” that inflate your token usage.

- Fine-Tuning Protection: Feeding malformed data into a fine-tuning pipeline is like pouring salt into a car’s engine. You’ll pay for the compute, but the resulting model will be useless.
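To make the schema-enforcement idea concrete, here is a minimal sketch of a validation gate that rejects a malformed payload before any tokens are spent. It deliberately uses only the Python standard library as a stand-in for what a library like Pydantic would do in production. The `TicketRequest` schema, its field names, and the `call_model` callback are hypothetical and exist only for illustration.

```python
import json
from dataclasses import dataclass


class ValidationError(ValueError):
    """Raised when a payload fails the schema check."""


@dataclass(frozen=True)
class TicketRequest:
    # Hypothetical schema for illustration; not taken from a real system.
    customer_id: str
    message: str
    priority: int


def parse_ticket(raw: str) -> TicketRequest:
    """Validate a raw JSON string before it is allowed to reach the model."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValidationError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValidationError("expected a JSON object")

    expected = {"customer_id": str, "message": str, "priority": int}
    for name, typ in expected.items():
        if name not in data:
            raise ValidationError(f"missing field: {name}")
        # bool is a subclass of int in Python, so reject it explicitly.
        if not isinstance(data[name], typ) or isinstance(data[name], bool):
            raise ValidationError(f"wrong type for field: {name}")
    if not data["message"].strip():
        raise ValidationError("message is empty")
    return TicketRequest(**{name: data[name] for name in expected})


def handle(raw: str, call_model) -> str:
    """Only spend tokens on payloads that pass validation."""
    try:
        ticket = parse_ticket(raw)
    except ValidationError as err:
        return f"rejected: {err}"  # no model call, no retry, no tokens
    return call_model(ticket)
```

The point of the design is that the rejection path never touches the model, so a broken upstream feed costs you a log line instead of a retry loop.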

Labeling: The Human Cost Multiplier

Then there’s the labeling. You can’t just outsource this to anyone; you often need domain experts to define the logic and review the quality loops. In regulated industries, this isn’t just expensive; it’s a bottleneck.

The Bottom Line: If you treat data prep as a “task” rather than core infrastructure, your project will stall. Not because the model is bad, but because you tried to build a high-performance system on a weak foundation. Validation isn’t just “data hygiene”, it’s your primary tool for cost control.

How does Token Usage in Cloud Infrastructure Hit Your AI Margins?

AI is deceptively cheap in pilot mode. When you only have five people testing a tool, the API bill is basically a rounding error. But at scale, AI stops being a “cool feature” and starts being a massive cloud economics problem.

As your traffic grows, token consumption doesn’t just increase; it compounds. Every retry, every embedding, and every long-winded system prompt adds up. If your architecture defaults to a high-end “Frontier” model for every single request, you are essentially using a Ferrari to deliver mail. It’s overkill, and it’s a fast way to burn through your budget.

This is exactly where most organizations lose their grip on spending. Even if your data is perfect, you still have to deal with the physics of the cloud. This is where The Hidden Costs of AI Systems move from the engineering desk to the monthly invoice.


Model Cascading: Don’t Overpay for Intelligence

The secret to scaling without going broke is Model Cascading.

Instead of treating every prompt the same, you introduce a routing layer that assigns tasks based on the complexity required.

- Small Language Models (SLMs): Use SLMs, models ranging from roughly 2B to 13B parameters, for the “grunt work.” Models like Mistral 7B, Phi-3 Mini, or Gemma 9B are incredibly efficient at intent detection, basic summarization, or structured extraction.

- Frontier Models: You “escalate” to the big models only when you need heavy-duty reasoning, complex multi-step analysis, or high-stakes creativity.

Think of it as tiering your intelligence. Most enterprise traffic consists of repetitive, low-complexity queries. You don’t need the world’s most advanced AI to tell a user where their shipping label is.
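A routing layer can be sketched in a few lines. The version below is only an illustration of the tiering idea: the keyword list, the word-count threshold, and the `small` and `frontier` callables are all assumptions made for the example. Real routers often use a small classifier or a confidence score from the cheap model instead of keywords.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical escalation hints, chosen for illustration only.
ESCALATION_HINTS = ("analyze", "compare", "explain why", "step by step", "strategy")


@dataclass
class Route:
    tier: str    # "small" or "frontier"
    reason: str  # kept for logging, so routing decisions can be audited


def choose_route(prompt: str, max_small_words: int = 60) -> Route:
    """Pick the cheapest tier that is likely to handle the prompt."""
    text = prompt.lower()
    if any(hint in text for hint in ESCALATION_HINTS):
        return Route("frontier", "prompt asks for multi-step reasoning")
    if len(text.split()) > max_small_words:
        return Route("frontier", "prompt is long")
    return Route("small", "short, routine request")


def answer(prompt: str,
           small: Callable[[str], str],
           frontier: Callable[[str], str]) -> str:
    """Send the prompt to whichever backend the router selected."""
    route = choose_route(prompt)
    backend = small if route.tier == "small" else frontier
    return backend(prompt)
```

With this shape, a question like "Where is my shipping label?" stays on the small tier, while a request to compare two contracts is escalated.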

The Strategic Reality: Controlling cloud costs isn’t about using AI less. It’s about using the right model for the right task. Model Cascading turns AI from a “black box” expense into a scalable system that actually respects your margins.

The Embedding Tax: Every document you index and every query you process requires a call to an embedding model. At scale, this becomes its own line item. Consider using open-source embedding models (e.g., nomic-embed-text, bge-m3) hosted on your own infrastructure to reduce this cost by 70–90% compared to third-party APIs.

Ongoing Maintenance: Why AI Systems Decay Without Discipline

One of the biggest traps in AI is the “set it and forget it” mentality. In reality, the moment an AI system hits production, it begins to rot. This isn’t because the code is buggy; it’s because the world around the model is constantly moving.

We call this Drift, and it’s a silent performance killer that usually shows up in two ways:

- Data Drift: This happens when the actual data coming in starts looking different from what the model was trained on.

- Concept Drift: This is even trickier. It’s when the “meaning” of the data changes because of new business contexts or regulations.
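Data drift on a numeric feature can be quantified rather than guessed at. One widely used measure is the Population Stability Index (PSI), which compares how a baseline sample and a live sample are distributed across the same bins. The sketch below is a minimal standard-library version; the bin count and the choice of feature (prompt length, for example) are assumptions, and concept drift needs labeled outcomes that this does not cover.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the baseline range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, though the right threshold depends on the feature and should be tuned against your own history.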

The real danger here isn’t a spike in your cloud bill, it’s silent user churn. If a customer gets three “hallucinations” or flat-out wrong answers in a row, they won’t file a ticket. They’ll just stop using the tool. Without loyalty metrics and real-time tracking, you won’t even notice your AI is failing until your adoption numbers bottom out.

Observability is Your “Operational Insurance”

To stay ahead of this, you need a strategy for Continuous Training (CT). Monitoring isn’t a “nice-to-have” dashboard for your DevOps team; it’s how you protect the integrity of your product.

This means you need eyes on everything: real-time input patterns, output quality scoring, and version control for your prompts. A “tiny” tweak to a system prompt can have massive downstream consequences that you’ll only catch if you’re actually looking.

The 20% Rule for Upkeep

You should budget roughly 15–20% of your initial AI investment each year just to keep the lights on. This “upkeep tax” covers everything from re-indexing embeddings to compliance audits and the inevitable retraining cycles.
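The arithmetic behind that range is simple enough to put in a function. The sketch below assumes a flat yearly upkeep charge calculated on the initial investment; the 500,000 figure is a hypothetical build cost, not a benchmark.

```python
def lifecycle_cost(initial_investment: float, years: int, upkeep_pct: float) -> float:
    """Initial build cost plus a flat yearly upkeep charge."""
    yearly_upkeep = initial_investment * upkeep_pct / 100
    return initial_investment + years * yearly_upkeep


# Hypothetical figures: a 500,000 build cost carried for three years.
low = lifecycle_cost(500_000, years=3, upkeep_pct=15)
high = lifecycle_cost(500_000, years=3, upkeep_pct=20)
print(f"3-year cost range: {low:,.0f} to {high:,.0f}")
# prints "3-year cost range: 725,000 to 800,000"
```

In other words, three years of upkeep at these rates adds 45–60% on top of the original build cost.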

The Strategic Reality: AI performance is never static. In fact, The Hidden Costs of AI Systems often peak during the maintenance phase because intelligence naturally decays the moment it hits the real world.

Integration and MLOps: The Reality of Running at Scale

There’s a common trap where teams celebrate “shipping” an AI feature like it’s the finish line. In reality, that’s just Day One of the real work.

Traditional software is predictable; it follows the rules you write. But AI is probabilistic, it’s “fuzzy.” This means your standard deployment pipeline isn’t enough. You need an entirely different layer of MLOps just to manage the guardrails, the logging pipelines, and the inevitable “why did the model just say that?” debugging sessions.

The Hidden Infrastructure Tax: Vector Databases

If you’re building anything that needs to “know” your company data, like a support bot that scans product manuals, you’re likely using RAG (Retrieval-Augmented Generation). This requires a Vector Database to store and search through millions of data embeddings.

At a small scale, the cost is a rounding error. But at enterprise scale, this becomes a massive infrastructure tax. Vector databases are notoriously memory-hungry. They live and breathe on high-performance RAM. As your document library grows, your RAM requirements grow with it, and your cloud bill follows suit.

Engineering Your Way Out of an AI Budget Crisis

You can’t just keep throwing hardware at a memory problem forever. To keep the system from eating your margins, you have to apply some actual engineering discipline. This is where Product Quantization (PQ) comes in.

PQ compresses each stored vector into a short code, and it is often paired with IVF indexing, which partitions the vectors into clusters so that each query scans only a fraction of them. Together they let you trade a small, controlled loss in search accuracy for a dramatic reduction in memory footprint, often 4x to 16x smaller. This is not lossless compression; it’s an engineered tradeoff. For most production RAG systems, the accuracy delta is negligible, but the cost savings are substantial.

- The Result: You slash your RAM consumption and stop the “linear cost growth” that usually kills RAG projects once they hit a certain volume.
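To see where a figure like 16x comes from, it helps to count bytes. The sketch below compares raw float32 storage with PQ codes plus their codebooks. The corpus size, the 768-dimension embedding, and the choice of 192 sub-vectors are assumptions picked for illustration, and the estimate ignores extra index overhead such as IVF centroids and vector IDs.

```python
def raw_bytes(n_vectors: int, dim: int, bytes_per_float: int = 4) -> int:
    """Memory for uncompressed float32 vectors."""
    return n_vectors * dim * bytes_per_float


def pq_bytes(n_vectors: int, dim: int, n_subvectors: int,
             bits: int = 8, bytes_per_float: int = 4) -> int:
    """Memory for PQ codes plus the codebooks needed to decode them."""
    if dim % n_subvectors:
        raise ValueError("dim must be divisible by n_subvectors")
    # Each vector is stored as one small code per sub-vector.
    code_bytes = n_vectors * n_subvectors * bits // 8
    # Each sub-vector has its own codebook of 2**bits centroids.
    codebook_bytes = n_subvectors * (2 ** bits) * (dim // n_subvectors) * bytes_per_float
    return code_bytes + codebook_bytes


# Hypothetical corpus: one million 768-dimensional embeddings.
raw = raw_bytes(1_000_000, 768)
compressed = pq_bytes(1_000_000, 768, n_subvectors=192)
print(f"raw: {raw / 1e9:.2f} GB, PQ: {compressed / 1e9:.2f} GB, ratio: {raw / compressed:.1f}x")
```

With these numbers the raw index needs about 3 GB of RAM and the PQ version a little under 0.2 GB. Fewer sub-vectors compress harder but cost more accuracy, which is the tradeoff to benchmark on your own retrieval set.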

MLOps is Not “DevOps Lite”

To run this at scale, your MLOps needs to be a core business function, not an afterthought. You need:

- Prompt Versioning: Because a single word change in a system prompt can completely change how your app behaves.

- Cost Tracking per Endpoint: So you can see exactly which feature is burning through your budget.

- Rollback Strategies: For when a “smarter” model update starts giving out “creative” but incorrect advice.
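Cost tracking per endpoint does not need heavy tooling to get started. Here is a minimal in-memory sketch; the class name, the endpoint and model names, and the single blended price per 1,000 tokens are all assumptions for the example. Real pricing usually differs for input and output tokens, and a production version would write to your metrics store rather than a dictionary.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class CostTracker:
    # Price per 1,000 tokens, keyed by model name. Values are placeholders.
    prices_per_1k: dict[str, float]
    totals: dict[str, float] = field(default_factory=lambda: defaultdict(float))

    def record(self, endpoint: str, model: str,
               prompt_tokens: int, completion_tokens: int) -> float:
        """Attribute the cost of one call to the endpoint that triggered it."""
        cost = (prompt_tokens + completion_tokens) / 1000 * self.prices_per_1k[model]
        self.totals[endpoint] += cost
        return cost

    def top_spenders(self, n: int = 3) -> list[tuple[str, float]]:
        """Endpoints sorted by accumulated spend, most expensive first."""
        return sorted(self.totals.items(), key=lambda kv: kv[1], reverse=True)[:n]
```

Calling `record` from the same wrapper that makes the model call keeps the attribution honest, because there is no path that spends tokens without being counted.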

The Strategic Reality: Integration isn’t a one-time task. Between the RAM requirements of vector DBs and the complexity of embedding pipelines, you are building a living system that needs constant tuning. The teams that win are the ones who optimize early using techniques like Product Quantization to build a system that scales efficiently rather than just getting more expensive.

Latency as a Cost Driver: Response time is a hidden cost vector. A cheaper model that adds 6–8 seconds of latency may require you to implement semantic caching (tools like GPTCache or Redis with vector search) or streaming responses, both of which add infrastructure complexity and cost. Always benchmark cost-per-response alongside latency-per-response, not just price-per-token.
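The idea behind semantic caching is to reuse an earlier answer when a new query is close enough in embedding space. The sketch below is an in-memory stand-in, not how GPTCache or Redis implement it: it does a linear scan, the `embed` function is supplied by the caller, and the 0.95 similarity threshold is an assumption you would tune.

```python
import math
from typing import Callable, Optional, Sequence

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, embed: Callable[[str], Vector], threshold: float = 0.95):
        self.embed = embed          # hypothetical embedding function
        self.threshold = threshold  # minimum similarity to count as a hit
        self.entries: list[tuple[Vector, str]] = []

    def get(self, query: str) -> Optional[str]:
        """Return a cached answer if a stored query is similar enough."""
        q = self.embed(query)
        best = max(self.entries, key=lambda e: cosine(q, e[0]), default=None)
        if best is not None and cosine(q, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, query: str, answer: str) -> None:
        """Store the answer under the query's embedding."""
        self.entries.append((self.embed(query), answer))
```

A cache hit skips the model call entirely, so it cuts both latency and token spend, at the price of an embedding call per query and the risk of serving a stale or slightly mismatched answer.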

How do Compliance, Security, and Ethics affect AI Costs?

Once an AI leaves the sandbox, it stops being a tech project and starts being a liability. The mistake most teams make is thinking legal and reputational risks scale linearly.

In regulated spaces like finance or healthcare, you should expect a “governance tax” of 10–20% on your total cost. It’s a bitter pill, but it’s the only thing keeping your company out of the headlines for the wrong reasons.

Privacy and Data Governance

You can’t just throw customer data at a model and hope for the best. You need real data residency controls and safeguards for PII. A single leak doesn’t just result in a fine; it’s a permanent hit to your brand’s trust. You need an audit trail that shows exactly who touched what, and when.

The “Black Box” Problem: Bias and Explainability

Regulators don’t care how “smart” your AI is if it’s biased or unexplainable. If your model makes a call on a loan or an insurance claim, you have to be able to show your work.

This requires constant bias testing and fairness audits. Building a framework that can actually trace a response back to its source is tedious and expensive, but it’s significantly cheaper than a class-action lawsuit.

New Ways to Break Things

AI opens up a whole new menu of security threats that a traditional firewall won’t catch:

- Prompt Injection: Malicious instructions embedded either directly in user input or indirectly inside external data sources (emails, documents, web pages) that cause the model to override its original instructions. Indirect prompt injection is especially dangerous in agentic systems where the AI reads and acts on external content autonomously.

- Data Poisoning: In fine-tuned systems, attackers corrupt the training dataset to manipulate model behavior. In RAG-based systems, the more common threat is knowledge base poisoning: injecting malicious documents into your vector database so the model retrieves and acts on adversarial information.

- Model Extraction: Competitors essentially “steal” your logic by bombarding your API with queries to reverse-engineer the outputs.

Securing this stuff isn’t a one-time setup. It’s a constant cat-and-mouse game.

The Reality: You can’t bolt compliance on at the end. If security is an afterthought, you’ll eventually pay for it through emergency fixes or legal battles. Moving fast without governance isn’t a competitive advantage; it’s just a disaster waiting to happen.

Organizational Change: Technology Is Only Half the Equation

You can build the most advanced AI system in the world and still watch it fail. Why? Because AI success isn’t just a technical problem, it’s a behavioral one.

When you drop AI into a company, workflows don’t just “improve”, they break and reform.

Decision-making patterns shift, and roles start to look different. If you don’t manage that transition, you’re left with a team that is either confused, resistant, or both. And let’s be clear: resistance is a quiet ROI killer.

The Real Cost of Adoption

People tend to budget for the software, but they forget to budget for the people using it. There are very real, tangible costs here:

- The Learning Curve: The productivity dip that happens while everyone figures out how the new tools work.

- Redesigning Workflows: You can’t just “bolt on” AI to an old process; you usually have to rebuild the process around the AI.

- Trust-Building: If your team doesn’t trust the model’s output, they’ll just bypass it. They’ll keep doing things the “old way” while your expensive AI sits idle.

Why Resistance is Expensive

The biggest barrier to AI isn’t usually a lack of technical capability; it’s human hesitation. If your users ignore the outputs or revert to manual processes, your productivity gains vanish, and your investment loses its legs.

Don’t Just Launch; Lead

Leaders need to stop treating AI like a typical software rollout and start treating it like a fundamental shift in how the business operates. This means:

- Role-Specific Training: Don’t give everyone the same “intro to AI” deck. Show them exactly how it changes their specific Tuesday morning workflow.

- Executive Sponsorship: If the leadership isn’t using it, nobody else will.

- Adoption Metrics: Stop tracking “features shipped” and start tracking “features actually used to solve a problem.”

The Reality: AI transformation isn’t a tech project; it’s an operating model shift. If your people aren’t moving with the technology, they’re being dragged behind it. And it’s a lot more expensive to drag people than it is to lead them.


Sustainability and Energy Use: The Environmental Cost Behind the Compute

AI doesn’t just burn through tokens; it burns through electricity.

Most people think of AI as something “in the cloud,” which makes it feel weightless. In reality, it runs on massive banks of GPUs and high-memory servers that are incredibly power-hungry.

As your usage scales, you aren’t just increasing your cloud bill, you’re significantly expanding your carbon footprint. This is a hidden operational cost that usually doesn’t get a spotlight until the ESG (Environmental, Social, and Governance) report is due.

Model Size is a Direct Energy Impact

It’s a simple equation: the bigger the model, the higher the energy bill.

- GPU Cycles: Massive models require more “juice” to process every single request.

- Infrastructure Overhead: It’s not just the chip; it’s the cooling and the massive distributed systems required to keep those chips running 24/7.

When you’re running a few thousand queries a day, it’s a rounding error. But when you hit millions of queries, that energy consumption multiplies. Your cloud provider might manage the hardware, but you are the one paying the premium for that power.

The ESG Pressure

If your company has made public commitments to sustainability or carbon neutrality, AI is your new biggest hurdle. Investors and boards are starting to ask the hard questions:

- Value vs. Volume: Is this specific AI feature worth the energy it takes to run it?

- Efficiency as a Strategy: Can we achieve the same result with an 8B-parameter model instead of a 70B one?

The Reality

Scaling AI without an efficiency plan leads to a “double hit”: your cloud costs spiral out of control just as your carbon footprint starts looking like a liability.

The Bottom Line: Efficiency in AI isn’t just about saving money anymore; it’s about strategic accountability. Organizations that win won’t just have the “smartest” AI; they’ll have the most efficient architecture, focusing on model optimization and cutting out the low-ROI features that are just burning power for the sake of it.

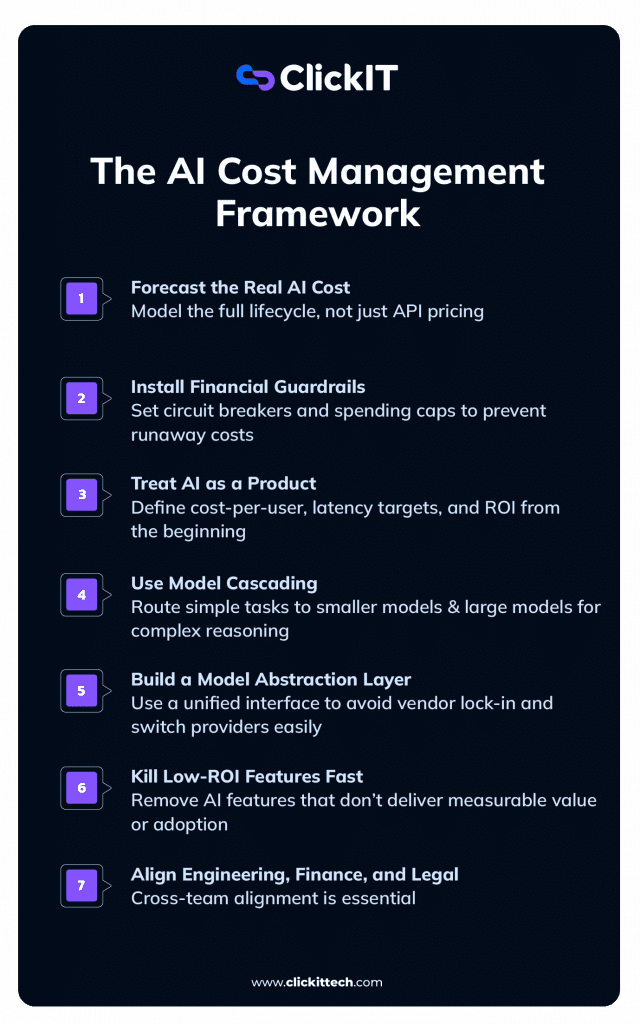

The AI Cost Management Framework: Moving from Hype to Financial Discipline

AI initiatives rarely fail because the models are too expensive. They fail because cost control wasn’t baked into the architecture on Day One. To move from reactive spending to proactive governance, you need a framework that treats AI like a core business function, not a high-priced science experiment.

- Forecast the “Real” TCO Early

Stop looking only at the API pricing page. If you aren’t modeling the full lifecycle (data prep, the heavy RAM requirements for vector databases, and that 20% annual maintenance “tax”), you aren’t seeing the real numbers. AI is a living operational system, not a one-time software build.

- Install “Financial Seatbelts”

AI agents are notorious for getting stuck in infinite loops. A minor bug in a recursive prompt can drain a monthly budget in a single weekend.

The Fix: Implement circuit breakers and hard spending caps at the API key level. These aren’t just “safeguards”; they are non-negotiable insurance against a catastrophic cloud bill.

- Treat AI as a Product, Not a Prototype

If you treat AI like a side experiment, the costs will stay as unpredictable as R&D. Define your cost-per-user, your latency benchmarks, and your ROI expectations immediately. When you treat AI as a product, the financial discipline follows naturally.

- Master the Art of Model Cascading

Don’t use a Ferrari to deliver the mail. Every routing decision is a margin decision. Use the smallest, most efficient models (SLMs) for 80% of your grunt work (classification, extraction, and basic chat), and save the expensive “Frontier” models for the 20% that actually require deep reasoning.

- Build an Abstraction Layer

Don’t let yourself get backed into a corner by vendor lock-in. By using an abstraction layer like LiteLLM, an open-source proxy that provides a unified API interface for 100+ LLM providers, you can swap between Op…

- Kill Low-ROI Features Fast

“AI sprawl” is a silent budget killer. If a feature isn’t showing measurable value or hitting its adoption targets, sunset it. Not every experiment deserves to live in production, where it can eat up infrastructure and governance overhead.

- Get Finance and Legal in the Room Early

AI isn’t just an engineering decision; it’s an enterprise decision. Cross-functional alignment ensures that your cost forecasting is grounded in reality and that you aren’t building something your legal team will force you to shut down six months later.
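The circuit-breaker idea from the framework can also be enforced inside the application, in addition to whatever caps your provider offers on the API key. The sketch below is a minimal illustration: `SpendGuard`, `run_agent`, the budget, and the per-step cost are hypothetical names and numbers, and a real agent would charge the actual cost of each call rather than a fixed amount.

```python
from typing import Callable


class BudgetExceeded(RuntimeError):
    """Raised when an agent run hits its spending or step limit."""


class SpendGuard:
    def __init__(self, budget: float, max_steps: int):
        self.budget = budget        # hard cap on spend for this run
        self.max_steps = max_steps  # hard cap on loop iterations
        self.spent = 0.0
        self.steps = 0

    def charge(self, cost: float) -> None:
        """Check both limits before allowing the next model call."""
        if self.steps + 1 > self.max_steps:
            raise BudgetExceeded(f"step limit of {self.max_steps} reached")
        if self.spent + cost > self.budget:
            raise BudgetExceeded(f"budget of {self.budget} would be exceeded")
        self.steps += 1
        self.spent += cost


def run_agent(step: Callable[[], bool], guard: SpendGuard, cost_per_step: float) -> None:
    """Run the agent loop until `step` reports completion or the guard trips."""
    while True:
        guard.charge(cost_per_step)  # fails closed before the call is made
        if step():
            return
```

Because the guard is checked before each call, a runaway loop stops at the cap instead of after the invoice arrives.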

AI doesn’t become dangerous because it’s powerful; it becomes dangerous when it’s unmanaged.

The Hidden Costs of AI rarely show up during the honeymoon phase of a demo. They surface in year two, inside bloated vector databases, unplanned retraining cycles, and cloud bills that grow faster than your revenue. At that point, what started as “innovation” can quickly start to feel like operational drag.

The Hidden Costs of AI aren’t accidents or “bugs.” They are the structural reality of moving intelligent systems into the real world. When leaders ignore lifecycle budgeting, validation layers, and cost routing, they aren’t just building a feature; they are creating a fragile system that will eventually eat their margins.

The organizations that actually win are the ones that acknowledge The Hidden Costs of AI Systems upfront. They are the teams that forecast the real TCO early, install financial circuit breakers, and build enough flexibility into their stack to swap models as the market shifts. They stop treating AI as a flashy add-on and start treating it as core infrastructure.

In the end, AI won’t reward speed alone; it rewards discipline. Intelligence without financial governance is just an expense. Intelligence with architectural rigor is a competitive advantage.

FAQs

If you’re only looking at the API price tag, you’re missing the biggest part of the invoice. The Hidden Costs of AI are buried in the “plumbing”: the hundreds of hours spent cleaning and labeling data, the high-performance RAM required for vector databases, and the constant energy consumption of large-scale compute.

Then there’s the “governance tax”, the 10-20% extra you’ll spend on security and compliance in regulated fields. Maintaining a system that is probabilistic and “fuzzy” is simply more expensive than running traditional, predictable code.

Because production AI is a living system that begins to “decay” the second it hits the real world. Once you launch, you’re fighting data drift (where incoming data changes) and concept drift (where business contexts shift), all while your library of embeddings expands.

The Hidden Costs of AI Systems compound over time because as your user base grows, so does the need for oversight and validation. If you didn’t build cost controls into the architecture from day one, you’re running a system that gets more expensive and less efficient every day.

In a word: Yes. One of the most dangerous myths is that AI is a “set it and forget it” technology. In reality, The Hidden Costs of AI include the non-negotiable need for ongoing upkeep, monitoring performance, retraining models, updating prompts, and running compliance audits.

If you don’t set aside that 15-20% annual buffer for maintenance, you aren’t actually saving money; you’re just deferring a much larger bill for a crisis intervention when the system eventually breaks.