Nearly three in four CIOs regret a major AI vendor or platform decision made within the past year. The pattern is consistent: impressive demos lead to rushed implementations, which reveal infrastructure weaknesses nobody anticipated until production.

Decisions that seem reasonable during evaluation (locking into a single LLM provider for better pricing, skipping MLOps to move faster, choosing platforms based on pilot performance) become the constraints that block scale later.

This guide breaks down the AI stack mistakes CTOs regret most, why they happen, and how to audit your current architecture before small shortcuts become expensive problems.

Why most CTOs regret their AI stack decisions

An AI stack is the combination of AI/ML frameworks, platforms, data infrastructure, and tooling that powers an organization’s AI initiatives.

CTOs frequently regret AI stack decisions that favor trendy technology over business logic, and the pattern follows a predictable cycle: excitement during impressive demos, rushed implementation under competitive pressure, then production failures that reveal infrastructure weaknesses nobody anticipated.

The regret typically stems from three compounding factors.

- First, demos showcase AI capabilities under ideal conditions with clean data and limited scale, which masks the infrastructure requirements for real-world performance.

- Second, AI vendor marketing creates urgency that accelerates purchasing decisions before teams fully evaluate long-term implications.

- Third, without clear oversight structures, bad choices compound as teams adopt additional tools without coordination.

What makes AI stack regret particularly painful is how quickly it accumulates. A platform decision made in Q1 shapes every subsequent technical choice, and by Q3, teams discover constraints they cannot easily reverse.

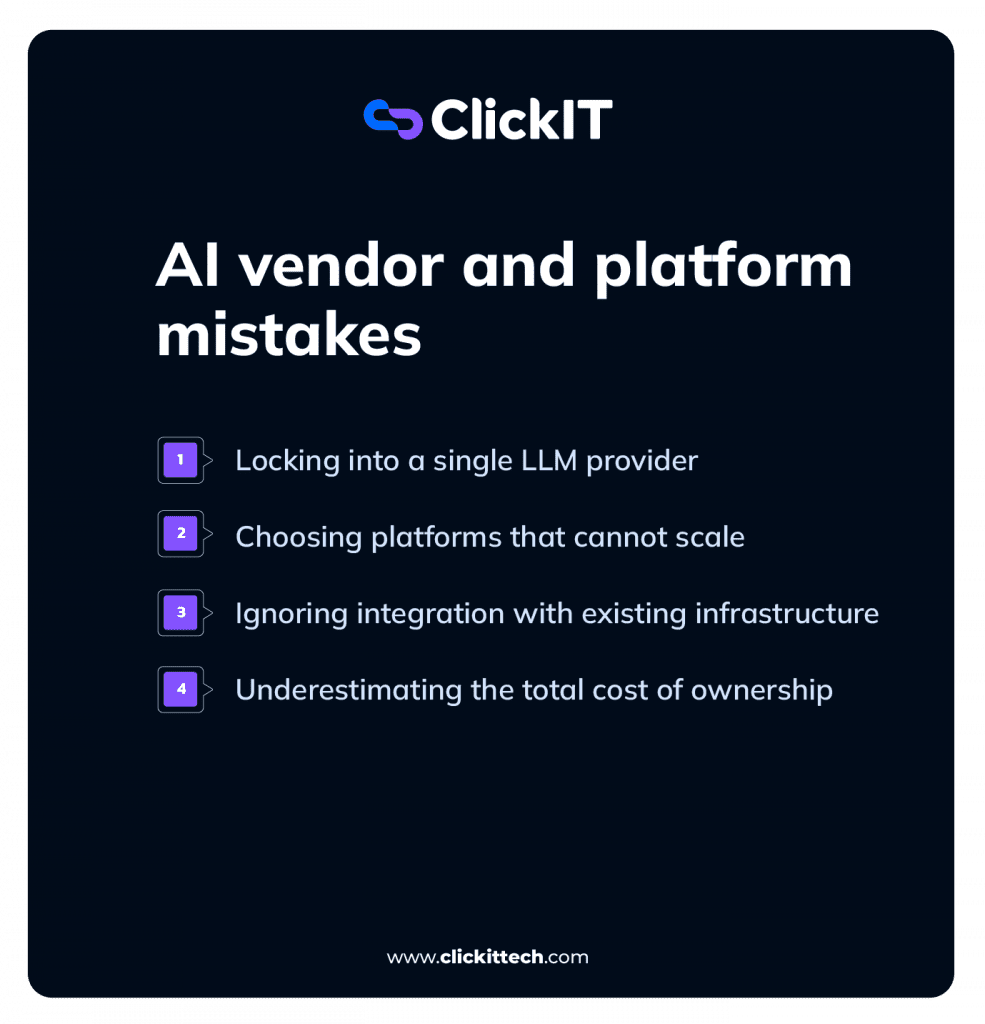

AI stack mistakes in vendor and platform selection

Vendor and platform decisions carry the highest stakes because they determine what becomes possible, and what becomes difficult, for years afterward.

Many of these decisions look reasonable during evaluation but create constraints that surface only after significant investment.

AI Stack Mistakes: Locking into a single LLM provider

Betting on one large language model provider limits flexibility as the AI landscape evolves. Model capabilities change rapidly, and what performs best today may fall behind within months as competitors release updates.

Organizations locked into single providers face difficult choices when better alternatives emerge: either accept inferior performance or undertake expensive migrations.

The challenge is that LLM providers often offer attractive pricing and integration support in exchange for exclusivity. That trade-off looks appealing during procurement but becomes a liability when the market shifts.

Read our blog LLM Cost Optimization

Choosing platforms that cannot scale

Platforms that work beautifully for proofs of concept often fail at production volume. Scalability issues typically surface only after teams have invested months of development work, when they discover that response times degrade under real user loads or costs spike unexpectedly.

A platform handling 100 requests per day during a pilot may struggle with 10,000 requests per day in production.

The architecture that seemed adequate for experimentation cannot support the throughput, latency, or reliability requirements of actual business operations.

Ignoring integration with existing infrastructure

AI platforms rarely operate in isolation. They connect to existing data systems, DevOps pipelines, and security controls. Siloed AI tools that cannot integrate with current infrastructure create operational chaos and duplicate data management overhead.

- Data synchronization: AI systems that cannot access real-time data from existing warehouses produce stale or inconsistent outputs

- Authentication and access control: Standalone AI tools often require separate user management, creating security gaps and administrative burden

- Monitoring and alerting: AI platforms disconnected from existing observability systems make it harder to detect and diagnose problems

Underestimating total cost of ownership

Initial pricing rarely reflects production reality. Hidden costs accumulate across compute resources, data storage, model retraining, and specialized talent.

Many CTOs discover their AI investments cost three to five times the original estimates once systems reach production scale.

| Cost category | What vendors quote | What production requires |
| --- | --- | --- |
| Compute | Base API pricing | Peak load capacity, redundancy |
| Storage | Initial data volume | Historical data, model versions, logs |
| Talent | Implementation hours | Ongoing maintenance, optimization |
| Training | Initial model setup | Continuous retraining, fine-tuning |
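One way to make this gap visible before signing is to put quoted and production estimates side by side. The sketch below is only an illustration: the `CostLine` type, the `tco_multiplier` helper, and every dollar figure are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class CostLine:
    """One cost category: quoted vs. estimated production cost (monthly, USD)."""
    category: str
    quoted: float
    production: float


def tco_multiplier(lines: list[CostLine]) -> float:
    """Ratio of total estimated production cost to total quoted cost."""
    quoted_total = sum(line.quoted for line in lines)
    production_total = sum(line.production for line in lines)
    if quoted_total <= 0:
        raise ValueError("quoted total must be positive")
    return production_total / quoted_total


# Hypothetical numbers for illustration only.
estimate = [
    CostLine("compute", quoted=2_000, production=7_000),
    CostLine("storage", quoted=500, production=2_000),
    CostLine("talent", quoted=5_000, production=12_000),
    CostLine("training", quoted=1_500, production=6_000),
]

print(f"Production cost is {tco_multiplier(estimate):.1f}x the quoted cost")
```

With these placeholder figures the multiplier comes out at 3.0. The useful part is not the number but the habit of filling in the production column for every category before procurement.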

Early architectural shortcuts that become constraints

Technical debt in AI systems compounds faster than in traditional software. Architectural decisions that seem reasonable for speed often block scale later, and the cost of fixing them grows with each passing month.

Skipping MLOps infrastructure

MLOps refers to the practice of deploying, monitoring, and managing ML models in production. Teams frequently skip MLOps setup to accelerate initial development, then face deployment bottlenecks when models need to move beyond experimentation.

Without proper MLOps, even well-performing models cannot reach users reliably. Teams end up with manual deployment processes, no version control for models, and no systematic way to roll back when something goes wrong.

The time saved by skipping MLOps early gets repaid many times over in deployment delays later.
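As a rough illustration of what "version control for models" and "a systematic way to roll back" mean at their simplest, here is a minimal in-memory sketch. The `ModelRegistry` class and the version strings are hypothetical; a dedicated registry tool would add artifact storage, approvals, and metadata.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    """Tracks deployed model versions in order so a rollback is one call."""
    history: list[str] = field(default_factory=list)

    def deploy(self, version: str) -> None:
        self.history.append(version)

    @property
    def current(self) -> str:
        if not self.history:
            raise LookupError("no model deployed")
        return self.history[-1]

    def rollback(self) -> str:
        """Drop the current version and return the one now serving."""
        if len(self.history) < 2:
            raise LookupError("no earlier version to roll back to")
        self.history.pop()
        return self.current


registry = ModelRegistry()
registry.deploy("churn-model:1.0")
registry.deploy("churn-model:1.1")
restored = registry.rollback()
print(restored)  # churn-model:1.0
```

Even this much gives a team an answer to "what is serving right now, and what was serving before it?", which manual deployment processes usually cannot provide.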

Building data pipelines without governance

Ungoverned data pipelines lack lineage tracking, quality checks, and access controls. Poor data foundations make AI outputs unreliable and difficult to debug.

When models produce unexpected results, teams without data governance cannot trace problems to their source.

- Was the issue in the training data?

- The feature engineering?

- The data transformation logic?

Without lineage tracking, answering these questions requires manual investigation that can take weeks.
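To show what lineage tracking buys, the sketch below records each transformation step alongside the data it produces. It is a toy under stated assumptions: the `Dataset` type, the `apply_step` helper, the step names, and the `crm_export` source are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Dataset:
    """Data plus the ordered list of steps that produced it."""
    rows: list[dict]
    lineage: list[str] = field(default_factory=list)


def apply_step(ds: Dataset, name: str, fn) -> Dataset:
    """Run a transformation and append its name and row counts to the lineage."""
    out_rows = fn(ds.rows)
    entry = f"{name}: {len(ds.rows)} -> {len(out_rows)} rows"
    return Dataset(rows=out_rows, lineage=ds.lineage + [entry])


raw = Dataset(
    rows=[{"age": 34}, {"age": None}, {"age": 51}],
    lineage=["source: crm_export"],
)
cleaned = apply_step(
    raw, "drop_missing_age",
    lambda rows: [r for r in rows if r["age"] is not None],
)
features = apply_step(
    cleaned, "add_senior_flag",
    lambda rows: [{**r, "senior": r["age"] >= 50} for r in rows],
)

for entry in features.lineage:
    print(entry)
```

When a model misbehaves, the lineage list shows which step dropped or altered rows, so the three questions above can be answered by reading a log rather than reconstructing the pipeline by hand.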

Hardcoding models instead of using abstraction layers

Model abstraction separates application logic from specific AI models. Hardcoded integrations make swapping models painful, sometimes requiring months of rework to replace a single component.

Consider an application that calls a specific LLM API directly throughout its codebase. When a better model becomes available, developers face a choice: rewrite every integration point or accept inferior performance.

Abstraction layers allow teams to upgrade or switch models by changing configuration rather than rewriting applications.
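A minimal sketch of such a layer might look like the following. The `TextModel` interface, the `ProviderA` and `ProviderB` adapters, and the `model_provider` config key are hypothetical stand-ins; real adapters would wrap each vendor's SDK behind the same method.

```python
from typing import Callable, Protocol


class TextModel(Protocol):
    """The only interface application code is allowed to depend on."""
    def complete(self, prompt: str) -> str: ...


class ProviderA:
    """Stand-in for one vendor's client; a real adapter would call its SDK."""
    def complete(self, prompt: str) -> str:
        return f"[provider-a] {prompt}"


class ProviderB:
    """Stand-in for a second vendor's client."""
    def complete(self, prompt: str) -> str:
        return f"[provider-b] {prompt}"


# Maps a config value to an adapter factory.
ADAPTERS: dict[str, Callable[[], TextModel]] = {
    "provider-a": ProviderA,
    "provider-b": ProviderB,
}


def load_model(config: dict) -> TextModel:
    name = config["model_provider"]
    if name not in ADAPTERS:
        raise ValueError(f"unknown provider: {name}")
    return ADAPTERS[name]()


def summarize(model: TextModel, text: str) -> str:
    """Application logic: knows nothing about which vendor is behind `model`."""
    return model.complete(f"Summarize: {text}")


model = load_model({"model_provider": "provider-b"})
print(summarize(model, "Q3 incident report"))
```

Switching vendors here means changing one config value and, at most, writing one new adapter class. `summarize` and every other caller stay untouched.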

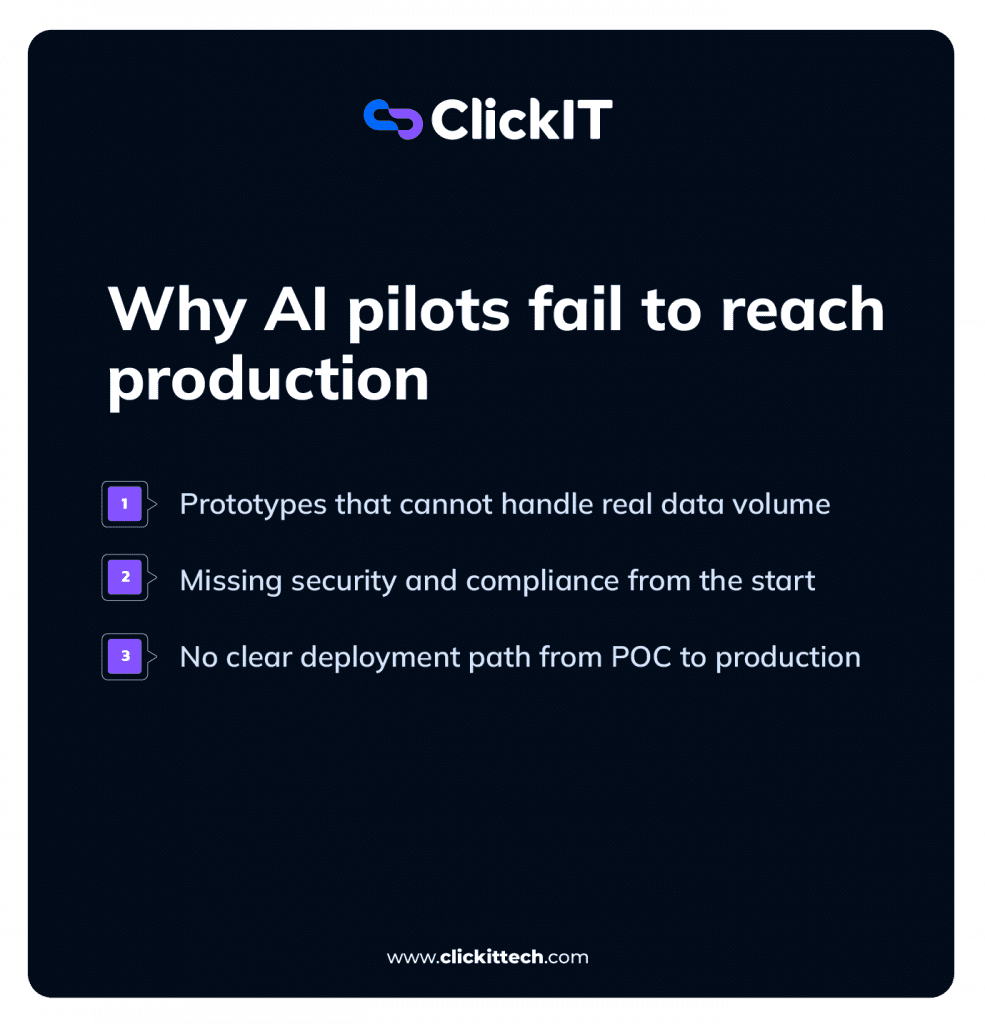

Why AI pilots fail to reach production

Most AI initiatives never leave the experimental phase. The gap between a working prototype and a production system is wider than many teams anticipate, and several common patterns explain why pilots stall.

Prototypes that cannot handle real data volume

Pilot data is often clean and small, while production data is messy and massive. Performance that looks promising during testing degrades when scale increases and data quality varies.

A model trained on 10,000 carefully curated examples may struggle with millions of real-world records containing missing values, inconsistent formatting, and edge cases nobody anticipated.

The prototype worked because the data was artificially clean, not because the architecture was sound.
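A cheap way to find out early is to run real records through explicit validation checks during the pilot. The sketch below assumes a hypothetical record schema (`customer_id`, `signup_date`, `amount`) and hypothetical rules; the point is the pattern, not these particular fields.

```python
from datetime import datetime


def validate(record: dict) -> list[str]:
    """Return a list of problems found in one record (empty list means clean)."""
    problems = []
    if record.get("customer_id") in (None, ""):
        problems.append("missing customer_id")
    try:
        datetime.strptime(str(record.get("signup_date")), "%Y-%m-%d")
    except ValueError:
        problems.append("signup_date not in YYYY-MM-DD format")
    amount = record.get("amount")
    if not isinstance(amount, (int, float)) or amount < 0:
        problems.append("amount missing or negative")
    return problems


records = [
    {"customer_id": "c-1", "signup_date": "2024-03-01", "amount": 120.0},
    {"customer_id": "", "signup_date": "03/01/2024", "amount": 80.0},
    {"customer_id": "c-3", "signup_date": "2024-03-09", "amount": None},
]

rejected = {i: validate(r) for i, r in enumerate(records) if validate(r)}
print(f"{len(rejected)} of {len(records)} records failed validation")
```

Tracking the rejection rate on a sample of production data gives a more honest preview of model behavior than accuracy on a curated pilot set.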

Missing security and compliance from the start

Pilots built without security controls (encryption, access management, audit trails) cannot meet enterprise requirements. Retrofitting compliance into an existing system is expensive and sometimes requires complete rebuilds.

Security and compliance considerations that often get deferred include:

- Data encryption: Both at rest and in transit

- Access logging: Who accessed what data and when

- Model audit trails: Which model version produced which output

- Data residency: Where sensitive information is processed and stored
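As one possible shape for the access-logging and model-audit items above, the sketch below writes a record tying a user, a model version, and an output together. The field names, the `audit_record` helper, and the choice to store hashes rather than raw text are assumptions for illustration; actual requirements depend on the regulations that apply.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(user: str, model_version: str, prompt: str, output: str) -> dict:
    """Build one audit entry; text is hashed so the log itself holds no raw data."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "model_version": model_version,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "output_sha256": hashlib.sha256(output.encode("utf-8")).hexdigest(),
    }


# In practice this would be append-only storage, not a Python list.
audit_log: list[str] = []

record = audit_record(
    "analyst@example.com", "risk-model:2.3", "score applicant 1182", "0.71"
)
audit_log.append(json.dumps(record, sort_keys=True))
print(audit_log[0])
```

Adding a record like this at the point where the model is called costs a few lines during the pilot. Reconstructing who saw which output from which model version after the fact is often impossible.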

No clear deployment path from POC to production

Data science experimentation and engineering-ready deployment require different capabilities. Teams that build pilots without considering deployment pipelines, monitoring, and rollback capabilities often find their prototypes cannot transition to production without significant rearchitecting.

The data scientist who built the model may not have considered how it will be containerized, how it will handle concurrent requests, or how it will fail gracefully when dependencies become unavailable.

These concerns feel premature during experimentation but become blockers during deployment.
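Failing gracefully when a dependency is unavailable can start as a small wrapper. The sketch below is a simplified illustration: `with_fallback`, the `flaky_model` client, and the `needs_manual_review` default are hypothetical, and a production version would typically add timeouts, backoff, and alerting.

```python
from typing import Callable


def with_fallback(
    primary: Callable[[str], str],
    fallback: Callable[[str], str],
    retries: int = 2,
) -> Callable[[str], str]:
    """Wrap `primary` so repeated failures degrade to `fallback` instead of crashing."""
    def call(request: str) -> str:
        for _ in range(retries):
            try:
                return primary(request)
            except (ConnectionError, TimeoutError):
                continue  # transient dependency failure: try again
        return fallback(request)
    return call


def flaky_model(request: str) -> str:
    """Hypothetical model client whose upstream dependency is down."""
    raise ConnectionError("feature store unavailable")


def rule_based_default(request: str) -> str:
    """Safe default used when the model cannot be reached."""
    return "needs_manual_review"


classify = with_fallback(flaky_model, rule_based_default)
print(classify("invoice-4471"))  # needs_manual_review
```

Deciding what the fallback should be (a cached answer, a rule-based default, a queue for human review) is a product decision, which is one reason it is worth raising before deployment rather than during an outage.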

AI talent and team structure decisions CTOs regret

People-related decisions create regrets that are harder to reverse than technology choices. Hiring mistakes and team structure problems slow AI initiatives more than technical challenges, and the effects ripple through organizations for months or years.

Hiring for stack fit instead of adaptability

Hiring engineers for specific AI frameworks backfires when technology evolves. Adaptability and strong fundamentals matter more than tool-specific experience, yet job descriptions often prioritize the wrong criteria.

An engineer who deeply understands machine learning principles can learn a new framework in weeks. An engineer who only knows one framework’s API may struggle when that framework falls out of favor or when the organization’s requirements change.

Building in-house when AI expertise is scarce

Recruiting AI talent in a competitive market takes longer than most timelines allow. The opportunity cost of waiting to build internal teams while competitors move forward often exceeds the cost of partnering with specialists who can deliver immediately.

Building an internal AI team from scratch typically takes 6-12 months before meaningful output begins. During that time, market conditions change, competitive pressure increases, and the original business case may no longer apply.

Rushing hires under delivery pressure

Urgent timelines lead to poor vetting. Bad AI hires slow teams more than open positions because they consume management attention, introduce technical problems, and affect team morale.

A rushed hire who lacks the skills to contribute independently requires constant supervision from senior team members. The net effect is negative productivity: the team moves slower with the bad hire than it would have with the position unfilled.

Governance gaps and shadow AI risks in the enterprise

Shadow AI refers to unapproved AI tools adopted by teams without IT oversight. Organizational governance failures create risks that compound over time and become increasingly difficult to address.

Ungoverned AI tool adoption across teams

Business units adopt AI tools independently, creating security risks and data fragmentation. Without visibility into what tools teams are using, organizations cannot manage risk or ensure compliance.

Marketing may be using one AI writing tool, sales another, and customer support a third. Each tool has different data handling practices, different security postures, and different terms of service. The organization has no consolidated view of where its data is going or what risks it has accepted.

Missing accountability for AI investment decisions

Without clear ownership for AI outcomes, failed investments repeat. Teams launch initiatives without defined success criteria, and no one bears responsibility when projects stall.

When everyone is responsible for AI success, no one is responsible. Projects drift without clear direction, resources get allocated without strategic alignment, and the same mistakes recur across different teams.

Compliance blind spots in AI workflows

Regulatory requirements that AI workflows must meet, such as HIPAA, SOC 2, and PCI, often surface late in development. Key compliance considerations include:

- Data residency: Where AI models process and store sensitive data

- Model explainability: Ability to explain AI decisions for regulatory audits

- Access controls: Who can train, deploy, and modify AI systems

- Data retention: How long AI systems keep input data and outputs

What successful AI stacks get right

Organizations that avoid common regrets share several architectural and organizational characteristics. The patterns are consistent across industries and company sizes.

Modular architecture with swap-out capability

Successful AI stacks allow component replacement without system-wide rewrites. Abstraction layers and well-defined APIs enable teams to upgrade individual pieces as better options emerge.

Built-in observability and monitoring

Observability means tracking model performance, data drift, and system health in production. Monitoring prevents silent failures where models degrade without anyone noticing until users complain.
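Data drift can be checked with simple statistics. The sketch below uses the Population Stability Index (PSI), one common drift measure, on a single numeric feature. The bin count, the synthetic samples, and the clamping of out-of-range values into the edge bins are choices made for this illustration, not a standard.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 5) -> float:
    """Population Stability Index between a baseline sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [float(x % 50) for x in range(500)]            # training-time values
live_same = [float(x % 50) for x in range(250)]           # same distribution
live_shifted = [float(x % 50) + 20 for x in range(250)]   # shifted upward

print(f"no drift: {psi(baseline, live_same):.3f}")
print(f"drift:    {psi(baseline, live_shifted):.3f}")
```

A frequently cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, though the right threshold depends on the feature and the use case. Running a check like this on a schedule and alerting on the result is what turns a silent failure into a visible one.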

Clear ownership and governance from day one

Accountability structures established before deployment prevent the confusion that derails projects. Someone owns each AI initiative’s success, with authority to make decisions and responsibility for outcomes.

Production-ready security and compliance controls

Embedding security (encryption, access management, audit logging) into the initial architecture avoids expensive retrofitting. Compliance becomes a design constraint rather than an afterthought.

How to audit your current AI stack for future risks

Proactive assessment identifies problems before they become expensive to fix. Four areas warrant regular evaluation:

- Vendor lock-in exposure: Evaluate how dependent systems are on specific vendors and estimate exit costs and migration difficulty

- Scalability bottlenecks: Stress-test current architecture against projected growth and identify single points of failure

- Compliance and security gaps: Audit data flows, access controls, and regulatory alignment across AI systems

- Technical and reliability debt: Inventory accumulated shortcuts that reduce system stability and prioritize remediation

Why CTOs who partner with AI experts early avoid regret

Learning through costly mistakes is one path forward. Partnering with experienced AI development teams is another.

Organizations that engage specialists early skip the learning curve on MLOps and deployment, work with teams experienced in HIPAA, SOC 2, and PCI requirements, and scale expertise based on project phase rather than committing to permanent headcount.

FAQs about AI stack decisions CTOs regret

Replacement costs include retraining models, migrating data, rewriting integrations, and retraining staff, often exceeding the original investment by two to three times.

Single-vendor lock-in, skipping MLOps, and choosing platforms based on demo performance rather than production capabilities are the most common examples.

Embedding compliance requirements into AI architecture from the start prevents expensive retrofitting that can delay or derail production deployment.

Technical debt refers to code shortcuts that slow future development, while reliability debt describes accumulated stability risks. Both compound faster in AI systems than in traditional software.